Introduction

We’ve spent the last two years building a program that incubates startups at the intersection of quantum computing and machine learning. It has been an incredible experience; we have had the privilege of working with some of the brightest minds in the field, and with some of the most inspiring entrepreneurs, who are investing years of their lives to make quantum computers that can solve real-world problems a reality.

As part of this effort we have had to explain quantum computing to hundreds of people, in some cases multiple times a day. This post explains the basics and the state of the industry today.

The Basics

Quantum computing is the attempt to harness the laws of quantum mechanics to perform computational tasks that would otherwise be intractable (i.e. that would take even very large classical computers the age of the universe to solve). Modern digital computers encode data into strings of binary bits, each of which can only be in one of two definite states: 0 or 1. By contrast, a quantum computer uses qubits, which can represent a one, a zero, or any weighted combination of these two states, where the weights determine the probabilities of reading out a 0 or a 1. This allows for an infinite set of states. The richer structure opens up new ways to store and process information, and with it the possibility of solving traditionally difficult problems through a new computational paradigm.
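To make the idea of a "weighted combination" concrete, here is a minimal sketch in plain Python. It assumes the standard textbook description of a single qubit as a pair of complex amplitudes; the function name and the example states are ours, chosen purely for illustration.

```python
import math

def measurement_probabilities(alpha, beta):
    """Return (P(0), P(1)) for a qubit with amplitudes alpha (for 0) and beta (for 1)."""
    p0 = abs(alpha) ** 2
    p1 = abs(beta) ** 2
    # A valid qubit state is normalised: the two probabilities sum to one.
    if not math.isclose(p0 + p1, 1.0, rel_tol=1e-9):
        raise ValueError("amplitudes must satisfy |alpha|^2 + |beta|^2 = 1")
    return p0, p1

# A definite 0, like a classical bit.
zero = (1.0, 0.0)
# An equal combination of 0 and 1.
balanced = (1 / math.sqrt(2), 1 / math.sqrt(2))
# An unequal combination; amplitudes may also be complex numbers.
skewed = (math.sqrt(0.2), 1j * math.sqrt(0.8))

for name, state in [("zero", zero), ("balanced", balanced), ("skewed", skewed)]:
    p0, p1 = measurement_probabilities(*state)
    print(f"{name}: P(0) = {p0:.2f}, P(1) = {p1:.2f}")
```

Because the amplitudes can take any values that satisfy the normalisation condition, there is a continuum of valid states rather than just two.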

How it Works

Quantum computing leverages the ability of qubits to exist in a state of multiple possibilities at once, a phenomenon referred to as superposition. Individual qubits can also be linked together so that the measurement of one qubit’s quantum state influences the possible quantum states of the other, a phenomenon known as entanglement. When qubits are entangled, the quantum information that they store is no longer localized to the individual qubits; rather, it is spread across them (i.e. it is stored in the correlations between them), allowing them to work together in parallel to solve complex problems.
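The following sketch simulates superposition and entanglement for two qubits on an ordinary computer. It assumes the standard state-vector picture (four amplitudes for the outcomes 00, 01, 10, 11) and the textbook Hadamard and CNOT gates; the helper names are hypothetical, and this is a classical simulation, not code for real quantum hardware.

```python
import math
import random

def apply_hadamard_q0(state):
    """Put the first qubit into an equal superposition. Order: 00, 01, 10, 11."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]

def apply_cnot(state):
    """Flip the second qubit when the first is 1 (swaps the 10 and 11 amplitudes)."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]

def sample(state, rng):
    """Simulate one measurement of both qubits."""
    weights = [abs(a) ** 2 for a in state]
    return rng.choices(["00", "01", "10", "11"], weights=weights)[0]

# Start in 00, create a superposition, then entangle the two qubits.
bell = apply_cnot(apply_hadamard_q0([1.0, 0.0, 0.0, 0.0]))

rng = random.Random(0)
outcomes = [sample(bell, rng) for _ in range(1000)]
# Each qubit on its own looks random, but the pair always agrees.
print({o: outcomes.count(o) for o in sorted(set(outcomes))})
```

Only the outcomes 00 and 11 ever appear: neither qubit has a definite value by itself, yet the two results are perfectly correlated, which is the sense in which the information lives in the correlations between them.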

What one can do with a quantum computer depends on, and is constrained by, the number and quality of qubits within a system. These qubits can be made of controlled atoms, electrons, photons (particles of light), or other engineered systems designed to behave quantum mechanically. Maintaining and manipulating them requires a very high degree of isolation and control, as any environmental disturbance can cause them to fall out of their quantum state (decohere). Consequently, depending on the specific technology, some of these machines are operated at extremely low temperatures to reduce thermal noise, allowing qubits to remain in a quantum state (remain coherent) for a longer period of time.

Available Hardware

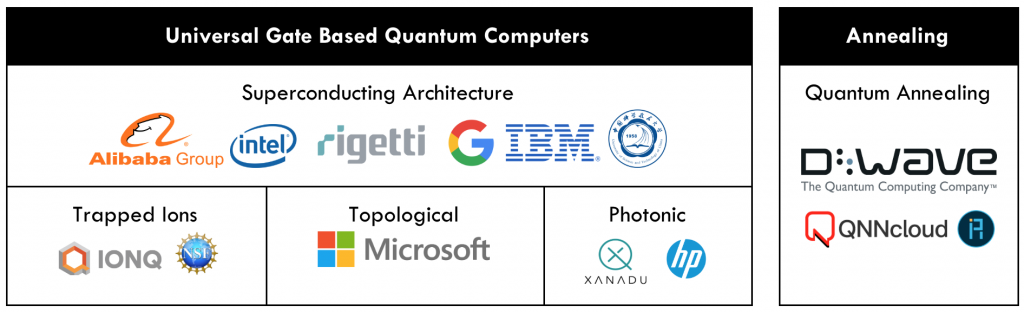

There are two different approaches to quantum computing: (1) universal gate-based and (2) quantum annealing. The table above shows companies developing different hardware architectures within these approaches. The differentiating factor between the two is how they arrive at a result. In the gate-model approach, quantum information (stored in qubits) follows sequential instructions, step by step, to achieve the desired result. These systems are seen as general-purpose quantum computers. With quantum annealing, one has to translate a problem into an “energy landscape”, which is encoded into the parameters of the qubits and the interactions between them, and the system then seeks out the lowest point of that landscape. Imagine a landscape of valleys, mountains, and basins after a rainfall: water will run to the lowest points of the basins. Quantum annealing finds low points in a similar way, with the benefit that the quantum state of the annealer can “tunnel” across barriers in the landscape, allowing the system to seek out the global minimum. These types of systems are designed to solve specific optimization and probabilistic sampling problems.
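As an illustration of what an “energy landscape” looks like in practice, the sketch below writes down a tiny three-variable problem in the Ising form commonly used to describe annealing problems: each variable is +1 or -1, `h` holds a bias per variable, and `J` holds the interaction strength per pair. The numbers are made up for illustration, and the search is a classical brute-force scan over all configurations, not quantum annealing; an annealer aims to find the same lowest point on problems far too large to enumerate.

```python
import itertools

# Hypothetical problem parameters, chosen only for illustration.
h = {0: 0.5, 1: -0.2, 2: 0.0}                 # bias on each variable
J = {(0, 1): 1.0, (1, 2): -1.0, (0, 2): 0.5}  # interaction between pairs

def energy(spins):
    """Height of the landscape at one configuration of +1/-1 values."""
    bias_term = sum(h[i] * spins[i] for i in h)
    coupling_term = sum(J[i, j] * spins[i] * spins[j] for (i, j) in J)
    return bias_term + coupling_term

# Three variables give 2**3 = 8 points in the landscape; list them all.
landscape = {spins: energy(spins) for spins in itertools.product([-1, 1], repeat=3)}
best = min(landscape, key=landscape.get)

for spins, e in sorted(landscape.items(), key=lambda item: item[1]):
    print(spins, round(e, 2))
print("lowest point:", best)
```

The number of configurations doubles with every added variable, which is why exhaustive search stops being practical and why specialised approaches to finding the minimum are of interest.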

Where the Industry is Headed

Over the past two years, the field of quantum computing has shifted from an emphasis on academic research and lab experiments to private companies designing and experimenting with larger quantum systems. Only a handful of algorithms have been mathematically proven to give a quantum computer a clear edge over the corresponding classical solution, and at the current stage of hardware development no concrete quantum advantage or supremacy has been demonstrated. This leads to two questions: (1) when will a system that can solve real-world problems be available, and (2) what are the near-term applications of the next two generations of architectures?

To answer the first question, there is a race to see who will be the first to build a system with commercially relevant computing capabilities, and the expected time frame for completion is uncertain (experts quote anywhere between three and fifteen years). As mentioned earlier, there are two different approaches to quantum computing being pursued. The first, known as gate-model quantum computing, is being pursued by most players in the field, with current qubit counts as follows: IBM, 50 qubits; Google, 72 qubits; Rigetti, 19 qubits; and Intel, 49 qubits. The other approach, quantum annealing, is generally easier to scale but faces its own hardware-imposed limitations. D-Wave Systems, the leading hardware developer in quantum annealing, currently has an available system with 2048 qubits. As these systems scale within both categories, it is important to note that the number of qubits is not the only measure of progress or processing power, because not all qubits are created equal. An infrastructure that gives qubits low error rates and high connectivity can be far superior to a system that has more qubits without these characteristics.
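A back-of-the-envelope calculation shows why error rates matter as much as qubit counts. The sketch below assumes a deliberately crude model in which every operation fails independently with the same probability, so a computation of `num_gates` operations runs cleanly with probability `(1 - gate_error) ** num_gates`. The two devices and their error rates are hypothetical and do not describe any system named above.

```python
def error_free_probability(gate_error, num_gates):
    """Chance that num_gates operations all succeed, assuming independent errors."""
    return (1.0 - gate_error) ** num_gates

# Hypothetical devices: one with noisier operations, one with cleaner operations.
noisy_device_error = 0.01    # 1% error per operation (assumed)
clean_device_error = 0.001   # 0.1% error per operation (assumed)

for num_gates in (10, 100, 1000):
    noisy = error_free_probability(noisy_device_error, num_gates)
    clean = error_free_probability(clean_device_error, num_gates)
    print(f"{num_gates:>4} operations: noisy {noisy:.3f}, clean {clean:.3f}")
```

Under this model the noisier device becomes unreliable after a few hundred operations regardless of how many qubits it has, while the cleaner device can run computations roughly ten times longer before reaching the same failure rate.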

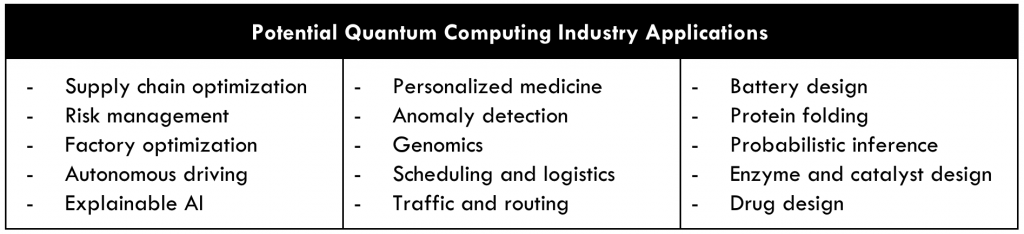

Despite the limitations of current systems, there exist hybrid quantum-classical algorithms that aim to use the best of both quantum and classical computing and that can run on current and near-term devices. These techniques are well suited to problems in optimization, probabilistic machine learning, and chemical and materials simulation.
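The general shape of a hybrid algorithm can be sketched as a loop: a quantum device prepares a state controlled by a tunable parameter and reports a measured quantity, and a classical optimizer adjusts the parameter to improve that quantity. In the toy below the "quantum" step is simulated classically for a single qubit, the function names are hypothetical, and the gradient uses the parameter-shift rule, which is one known technique for this kind of circuit and not necessarily what any particular platform uses.

```python
import math

def quantum_expectation(theta):
    """Stand-in for the quantum device: rotate one qubit by theta, return P(0) - P(1)."""
    amp0 = math.cos(theta / 2)
    amp1 = math.sin(theta / 2)
    return amp0 ** 2 - amp1 ** 2

def parameter_shift_gradient(theta):
    """Estimate the slope from two extra evaluations of the quantum step."""
    shift = math.pi / 2
    return (quantum_expectation(theta + shift) - quantum_expectation(theta - shift)) / 2

# Classical outer loop: plain gradient descent on the single parameter.
theta = 0.3          # arbitrary starting point
learning_rate = 0.4  # arbitrary step size
for step in range(100):
    theta -= learning_rate * parameter_shift_gradient(theta)

print(f"theta = {theta:.4f}, expectation = {quantum_expectation(theta):.4f}")
```

The quantum step stays short, which suits devices that can only remain coherent briefly, while the optimization itself runs on classical hardware.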

Final Thoughts

The excitement around quantum computing stems from its potential to qualitatively expand which computational problems we are capable of solving, allowing us to find solutions to important but hard and complex problems that are intractable with modern classical computing. While these are early days, the industry is now in a critical and accelerating period in which the coming years will determine its commercial success or failure. Over the next five years, the industry will see major advancements in quantum hardware and software infrastructure. The promise is that this technology may one day have a transformative effect on the ways we understand and deal with complex systems, with applications ranging from portfolio management and traffic optimization to personalized healthcare and drug design.

About the Authors*:

Eric Brown is a Venture Manager in the Quantum Machine Learning Stream and leads the Quantum Solutions Initiative at the Creative Destruction Lab. Eric has a PhD in Quantum Information from the University of Waterloo and spent two years as a postdoctoral fellow at the Institute of Photonic Sciences in Barcelona.

Ani Chemilian is a Venture Manager in the Quantum Machine Learning Stream and manages the High School Girls Program at the Creative Destruction Lab. She is a graduate of Rotman Commerce at the University of Toronto and holds an Associate Diploma (ARCT) in piano performance from the Royal Conservatory of Music.

*Thank you to Peter Wittek and Daniel Mulet for their input, feedback, and context on the state of the quantum computing industry.

About the Creative Destruction Lab Quantum Machine Learning Program:

The Creative Destruction Lab (CDL), based at the Rotman School of Management, launched the Quantum Machine Learning (QML) Incubator Stream in 2017. This eleven-month program brings together entrepreneurs, investors, leading quantum information researchers, and technology partners such as D-Wave Systems, Rigetti Computing, and Xanadu to create new ventures at the intersection of quantum computing and machine learning. Participants receive US$80K in equity investment from Bloomberg Beta, Data Collective, and Spectrum28, office space in downtown Toronto, and intensive technical training from industry and academic leaders in quantum computing and machine learning.

The pace of development in quantum computing mirrors the rapid advances made in machine learning and artificial intelligence. It is natural to ask whether quantum technologies could boost learning algorithms: this field of inquiry is called quantum-enhanced machine learning. Peter Wittek, Academic Director of the CDL Quantum Machine Learning program, is launching a course to show what benefits current and future quantum technologies can provide to machine learning, focusing on algorithms that are challenging for classical digital computers. The course puts a strong emphasis on implementing the protocols using open source frameworks in Python. Prominent researchers in the field will give guest lectures to provide extra depth on each major topic. These guest lecturers include Alán Aspuru-Guzik, Seth Lloyd, Roger Melko, and Maria Schuld.